Machine Vision Inspection Systems: How to Account for Big Defects and Minor Ones Too

There’s an old saying: Stuff happens. When it comes to a converting production line, “stuff” manifests in the form of defects, such as:

- Parts not assembled to specification

- Incorrect materials being added to parts

- Poor print quality on parts

- Incorrect labels applied

- Parts not assembled correctly

Machine vision inspection systems were created to help avoid these defects and improve your overall quality.

Yet to really make vision system technology work, you have to imagine the worst-case scenario and the minor defects too.

What Are Machine Vision Inspection Systems?

Machine vision systems use smart-camera technology to detect production-line errors, often ones undetectable by the human eye. They are typically integrated with a machine’s PLC, automating quality control.

Machine vision systems have four main applications, known by the acronym GIGI.

GIGI: The Four Key Machine Vision Applications

John Keating, Director of Product Marketing with Cognex, notes that there are four key machine vision applications, identified by the acronym GIGI: Guide, Identify, Gauge and Inspect. (Be sure to listen to our audio interview later in this post with John, and check out Cognex’s white paper: Introduction to Machine Vision.)

Let’s break down the details:

1. Guide

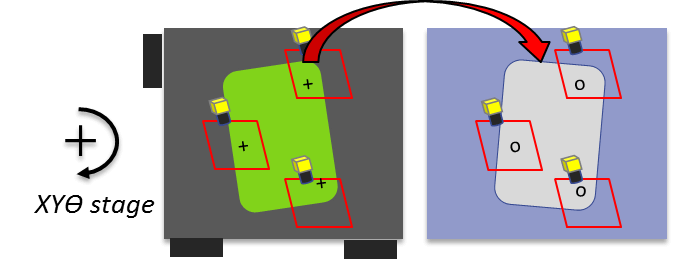

The most critical application is “Guide,” which involves finding the part. If you can find the part, you can inspect it and measure it. You want to find a location, and then tell something — typically a robotic guidance mechanism — to go to that position.

- Automates production

- Provides flexibility

- Improves quality and yield

Guide Example:

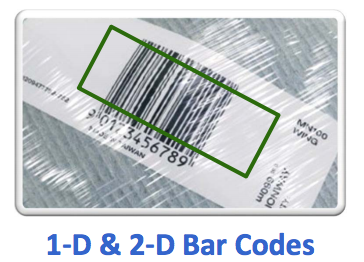

2. Identify

The second most prominent application is “identifying” something. This can involve reading a barcode, or recognizing which shape and/or color is present. It can be human readable text, such as the date code on a product, and uses a technology known as optical character recognition (OCR).

- Reads codes or characters, or identifies by color, shape or assembly

- Controls material flow, enables traceability, collects essential data

Identify Example:

3. Gauge

In this application, you’re taking measurements of a product. In effect, you’re doing what Keating refers to as a reverse CAD approach.

With CAD, you have a measurement in mind, and you use it to create a drawing. The reverse uses machine vision technology to “extract” a measurement from the part, and then checks that it’s the correct measurement.

- Precise, fast, non-contact measurement

Gauge Example:

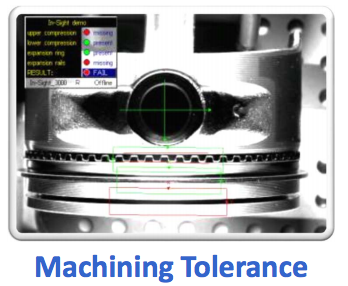

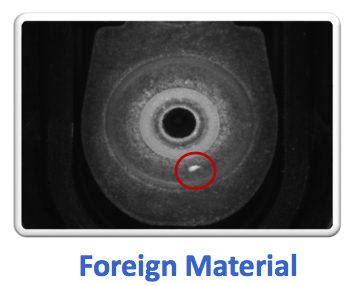
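
To make the “reverse CAD” idea concrete, here is a minimal Python sketch of a gauge check. It assumes the camera has already measured a width in pixels and that you know the calibration scale; the names (`Tolerance`, `pixels_to_mm`, `within_tolerance`) and all of the numbers are hypothetical, not taken from any vendor's software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    """Nominal dimension and allowed deviation, all in millimeters."""
    nominal_mm: float
    plus_mm: float
    minus_mm: float


def pixels_to_mm(length_px: float, mm_per_pixel: float) -> float:
    """Convert a length measured in image pixels to millimeters."""
    return length_px * mm_per_pixel


def within_tolerance(measured_mm: float, tol: Tolerance) -> bool:
    """Return True when the measurement falls inside the tolerance band."""
    lower = tol.nominal_mm - tol.minus_mm
    upper = tol.nominal_mm + tol.plus_mm
    return lower <= measured_mm <= upper


# Hypothetical values: a 25.00 mm wide part, +/- 0.10 mm, 0.05 mm per pixel.
width_tol = Tolerance(nominal_mm=25.0, plus_mm=0.10, minus_mm=0.10)
measured = pixels_to_mm(length_px=501.0, mm_per_pixel=0.05)
print(f"{measured:.2f} mm in tolerance: {within_tolerance(measured, width_tol)}")
```

In a real system the pixel measurement and the calibration would come from the vision tools themselves; the point here is only the extract-then-compare step.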

4. Inspect

With inspection, the vision system checks 100% of your parts for surface defects, malformed plastic parts, print imperfections, and other types of defects.

- Completeness

- Correct location

- Quality

- Process control

Inspect Example:

With each of these applications, the task of the machine vision system is to make the manufacturing process more efficient, reduce waste, and increase your throughput.

When Do Companies Opt for a Vision Inspection?

Integrating machine vision technology into your converting process is usually evolutionary.

To determine if you even need machine vision technology, Todd Kruse of Delta ModTech recommends identifying your critical processes, and where vision technology might be useful.

“Is it to qualify the process, or is it to inspect incoming materials?” Kruse asks.

Most young companies aren’t proactive in their use of vision inspection systems, Keating notes. For most companies, pursuing machine vision is reactive: they have a big problem that needs to be fixed.

If a problem occurs on an existing machine, people will install the technology after production has begun. Eventually, “it will creep up the line, moving upstream to eliminate problems earlier in the process,” Keating said.

More mature companies will eventually integrate machine vision systems into their machine design. Once companies use the technology, Keating said, they will start to wonder, “Now where else do we have a problem?”

One thing that is often missing, particularly across the GIGI applications, is a set of robust, reliable vision tools. Without those, and a flexible configuration interface, the system is not going to work well.

The Key to Effective Vision Imaging: LIGHTING

The difference-maker with machine-vision cameras? Here’s a clue: LIGHTS, camera, action.

That statement applies to your industrial vision systems as much as it does to Hollywood – particularly the lighting.

It’s a simple concept: You’re trying to spot a defect with your machine vision camera. If you get a bad image, you won’t be able to spot the problem. If the part is properly lit, however, the defect will be highlighted, often in ways that might not be visible to the naked eye.

Here are some lighting methods to get the most out of your machine vision camera:

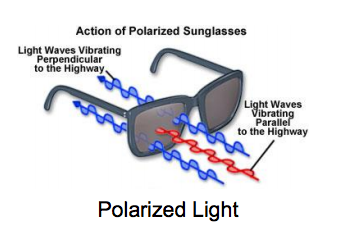

Polarized Lighting

Polarized lighting uses polarizing filters to cut down glare and stray reflections coming from other directions.

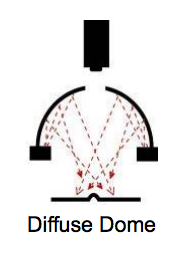

Diffuse Dome Lighting

Any photographer will tell you that a cloudy day is a perfect time to take pictures. Clouds diffuse the light, so you don’t get hot spots of light. This is an ideal application for a curved part.

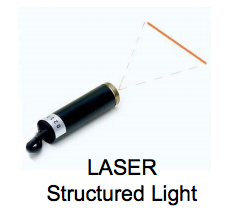

Laser Structured Lighting

Lasers can emit a straight line. If there is a change in height, the line will bend. You should see a repeatable shape when using a laser. If the laser line bends differently than it does on a good part, that indicates a defect.
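
As a rough illustration of that comparison, the Python sketch below treats the laser line as a list of row positions (one per image column) and flags a part when the line strays too far from a known-good reference. The function names, the sample profiles, and the 3-pixel limit are all assumptions made for the example; real systems would extract the line with their own vision tools.

```python
def max_profile_deviation(reference, measured):
    """Largest absolute difference between two equal-length line profiles."""
    if len(reference) != len(measured):
        raise ValueError("profiles must have the same number of samples")
    return max(abs(r - m) for r, m in zip(reference, measured))


def profile_is_defective(reference, measured, limit_px):
    """Flag the part when the laser line strays beyond the allowed limit."""
    return max_profile_deviation(reference, measured) > limit_px


# Hypothetical laser-line row positions (pixels), one value per image column.
good_reference = [120, 120, 118, 115, 118, 120, 120]
normal_part = [120, 121, 118, 114, 118, 120, 119]
dented_part = [120, 120, 118, 115, 126, 120, 120]

print(profile_is_defective(good_reference, normal_part, limit_px=3))
print(profile_is_defective(good_reference, dented_part, limit_px=3))
```

The limit is the knob to tune during setup: too tight and normal part-to-part variation gets flagged, too loose and shallow dents slip through.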

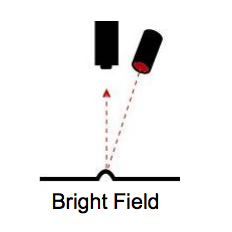

Bright Field Lighting

Light is used to illuminate a defect, for example when your part has a smooth surface. This is a very common way to provide consistent lighting on matte surfaces.

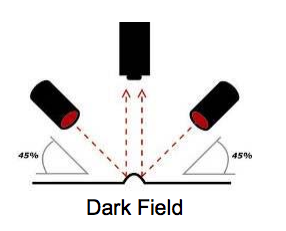

Dark Field Lighting

A light shines at a very low angle at a part. What you’re trying to do is highlight a height or a depth. It’s like when you hold a piece of scratched glass up to a light and rotate it to spot the mark. You’re creating a dark field effect so you can see the shadow.

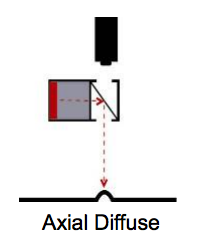

Axial Diffuse Lighting

Also known as diffuse on-axis lighting. This approach allows the light to bounce off of a half mirror, with the lighting coming from the optical center of the lens. This is useful for reflective parts, rounded parts, or parts that need light to shine into holes or crevices in the part.

Keating notes that you determine which techniques will be used during the initial setup and trial run.

Even with great lighting, vision won’t work successfully unless you have a full vision toolkit and a flexible configuration interface to handle the “what-ifs.” Reliable, flexible tools help ensure a successful deployment.

What’s important is that the lighting you choose shouldn’t just address the big problems. “It’s important to look at the marginal issues,” Keating said. “It’s easy to find the good and the bad, but what about the ones on the edge?”

We’ll get to how these marginal defects impact the entire system, but next, let’s look at how to deploy a machine vision system.

Best Practices for Deploying Your Machine Vision System

We’ve detailed the applications of a machine vision system (GIGI) and the secret sauce that makes it work (optimal lighting). But what are the stages to deploy a system in a way that delivers maximum benefit to the bottom line?

No company wants a system that doesn’t pay for itself. So let’s look at how the stages of deployment ensure your vision system delivers plenty of bang for your buck.

Stage 1 – Feasibility

To this point, everything you’ve read in this post centers on feasibility. Feasibility is all about doing the upfront work, which involves assessing the accuracy the system can achieve, and the requirements it needs to operate.

It also includes analyzing the timing of the system. Can you perform the tests without slowing down the production line? Is the resolution of the problem in line with your requirements? All this needs to be done upfront, before you move on to the application development.
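
The timing question comes down to simple arithmetic, sketched here in Python. The function names, the sample timings, and the 80% safety margin are assumptions for illustration only; your own feasibility study would supply measured numbers.

```python
def time_budget_ms(parts_per_minute: float) -> float:
    """Time available per part, in milliseconds, at a given line rate."""
    return 60_000.0 / parts_per_minute


def fits_line_rate(parts_per_minute: float, acquire_ms: float,
                   process_ms: float, io_ms: float,
                   margin: float = 0.8) -> bool:
    """Check that the full inspection cycle fits in the per-part budget.

    `margin` is an assumed safety factor: only that fraction of the
    budget is treated as usable, leaving headroom for slow cycles.
    """
    total_ms = acquire_ms + process_ms + io_ms
    return total_ms <= margin * time_budget_ms(parts_per_minute)


# Hypothetical timings: 10 ms to acquire, 45 ms to process, 5 ms to signal.
print(fits_line_rate(600, acquire_ms=10, process_ms=45, io_ms=5))
print(fits_line_rate(1200, acquire_ms=10, process_ms=45, io_ms=5))
```

At 600 parts per minute the 60 ms cycle fits comfortably in a 100 ms budget; at 1,200 parts per minute the budget drops to 50 ms and the same inspection would slow the line.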

Stage 2 – Application Development

Now that your team has done the theoretical work, it’s time to put your machine vision system into practice and tie it into your production line.

You’ll be integrating the system with your PLC and determining what actions to take when a defective part reaches the production station. Application development and feasibility are the two most important stages of your deployment.
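
One common way to act on a defect at the right moment is to track each inspection result until the part arrives at a downstream reject station. The Python sketch below shows the idea with a simple queue. In practice this logic usually lives in the PLC; the class name, method name, and two-position offset here are hypothetical.

```python
from collections import deque


class RejectTracker:
    """Carries inspection results from the camera to a downstream station."""

    def __init__(self, positions_to_reject_station: int):
        # Positions between camera and reject station start out empty,
        # so they are treated as passes (nothing to reject yet).
        self._results = deque([True] * positions_to_reject_station)

    def on_part_indexed(self, inspection_passed: bool) -> bool:
        """Call once per part index.

        Stores the newest camera result and returns True when the part
        now sitting at the reject station should be rejected.
        """
        self._results.append(inspection_passed)
        passed_at_station = self._results.popleft()
        return not passed_at_station


# Hypothetical line: reject station is two part positions after the camera.
tracker = RejectTracker(positions_to_reject_station=2)
camera_results = [True, False, True, True, True]
reject_signals = [tracker.on_part_indexed(r) for r in camera_results]
print(reject_signals)
```

The second part fails at the camera, and the reject signal fires two index steps later, when that same part reaches the station.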

Stage 3 – Commissioning

Now it’s time to go live, and integrate the machine vision software into your production line. Once the system is up and running, you’ll be checking to see if the system is doing its job on an ongoing basis. You’ll likely be adjusting settings once operation begins.

Stage 4 – Maintaining

The maintenance stage involves monitoring the ongoing performance of the system. Here’s where you can integrate some quality controls into the system, perhaps reviewing the material recorded by the machine vision system.

There may also be simple equipment maintenance, such as cleaning the lens every day.

Keating points out that if you perform stages 1 and 2 correctly, then 3 and 4 become easy.

Compliance and Security – 21 CFR Part 11

Compliance and security come into play for medical equipment and pharmaceutical manufacturers. Are you in compliance with 21 CFR Part 11? Are there requirements for password protection and documenting who logged in and what they did?

Machine vision systems play a major role in adhering to these standards and regulations. As the technology becomes more robust, you can count on that role expanding.
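
To picture what “documenting who logged in and what they did” might look like, here is a bare-bones Python sketch of an audit trail. It is an illustration only and is not a compliant implementation; the class names, field names, and sample actions are all hypothetical, and a real system would also need secure storage, access control, and validation.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One immutable entry: who did what, and when (UTC)."""
    timestamp_utc: str
    user: str
    action: str
    detail: str


class AuditTrail:
    """Append-only list of audit records (in memory, for illustration)."""

    def __init__(self):
        self._records = []

    def record(self, user: str, action: str, detail: str = "") -> AuditRecord:
        """Add a timestamped entry and return it."""
        stamp = datetime.now(timezone.utc).isoformat()
        entry = AuditRecord(stamp, user, action, detail)
        self._records.append(entry)
        return entry

    def records(self):
        """Return a read-only snapshot of every entry so far."""
        return tuple(self._records)


# Hypothetical session: an operator logs in and changes an inspection limit.
trail = AuditTrail()
trail.record("operator1", "login")
trail.record("operator1", "change_threshold", "label offset limit 0.5 -> 0.4 mm")
for entry in trail.records():
    print(entry.timestamp_utc, entry.user, entry.action, entry.detail)
```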

Can Your Machine Vision Inspection System Tackle the Major Defects and the Minor Ones too?

You want to use a machine vision system to monitor those critical processes, as Kruse noted. But Keating also stresses tracking minor defects, or “marginal errors,” as he refers to them.

Marginal errors don’t tend to be the obvious glitches in production. They’re the minor variations, the worst-case scenarios that always seem to follow Murphy’s Law.

For example, what if you have to switch vendors and the part now has a slightly different surface, and the light reflects off it in a different way? Can your vision system accommodate that kind of change?

“It’s easy to find the best parts and the worst parts – but it’s planning for reality that’s the challenge,” he notes.

Set up your machine vision system so it can accommodate the parts on the “hairy edge of passing or failing.” When you use the technology to monitor the critical processes and plan for the minor defects, you’ll get the biggest ROI from your machine vision system.
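
One simple way to plan for parts on that edge is to add a third outcome between pass and fail. The Python sketch below assumes a single measured value with lower and upper limits plus a guard band just inside each limit; the function name, limits, and guard band are hypothetical values chosen for the example.

```python
def classify(measured: float, lower: float, upper: float,
             guard_band: float) -> str:
    """Sort a measurement into 'pass', 'marginal', or 'fail'.

    Values outside the limits fail. Values inside the limits but within
    `guard_band` of either limit are marginal and worth a closer look.
    """
    if measured < lower or measured > upper:
        return "fail"
    if measured < lower + guard_band or measured > upper - guard_band:
        return "marginal"
    return "pass"


# Hypothetical limits: 24.90 to 25.10 mm with a 0.03 mm guard band.
for width_mm in (25.00, 25.09, 25.20):
    print(width_mm, classify(width_mm, lower=24.90, upper=25.10,
                             guard_band=0.03))
```

Tracking how many parts land in the marginal bucket can give you early warning of a drifting process or a changed material before outright failures show up.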

To learn more about implementing machine vision systems, contact us online or call 1-763-755-7744.